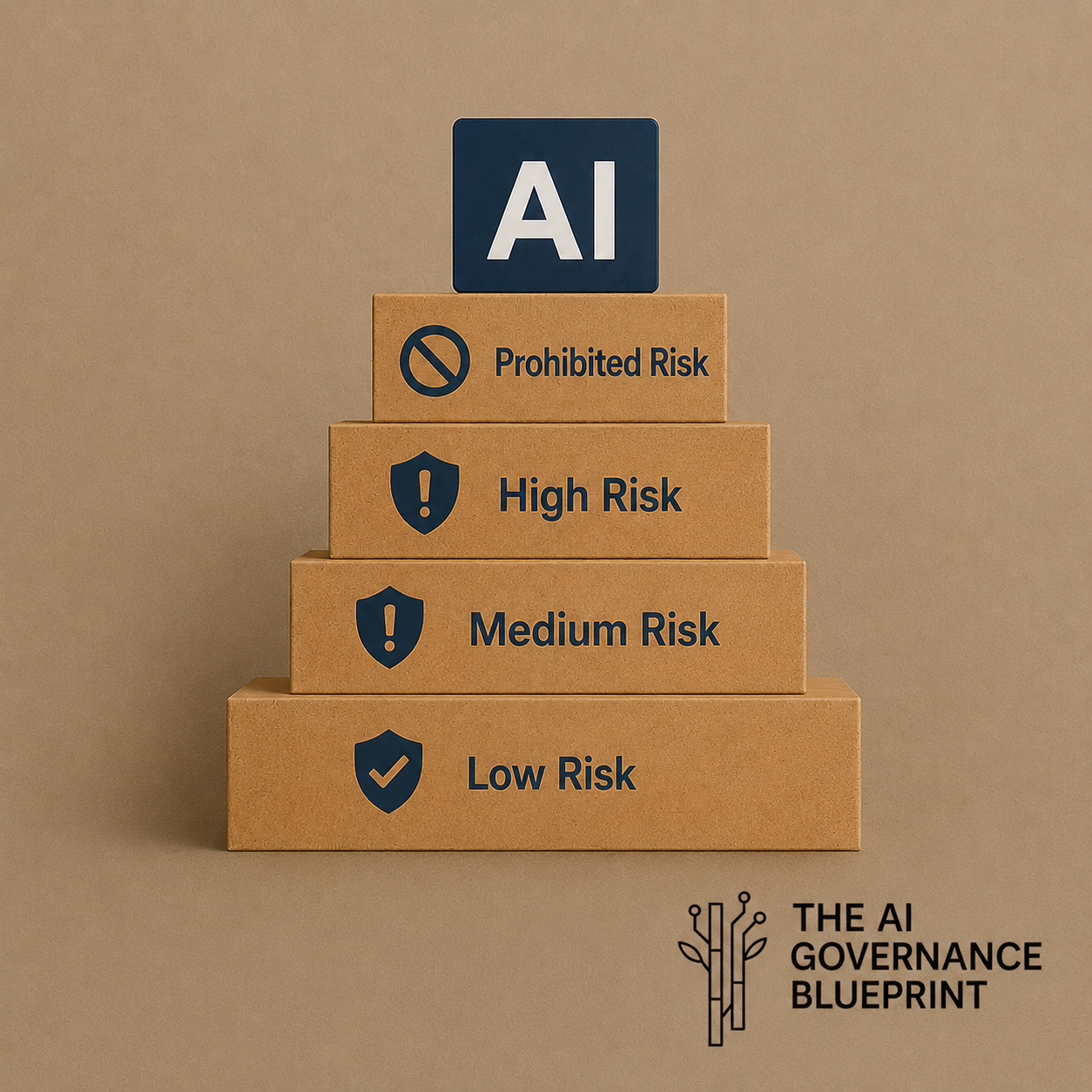

AI Risk Tiering: Not All AI Is Equal

Classifying AI for Risk-Based Control Mapping in the Enterprise

The Way AI Risk Actually Shows Up

Across organizations, AI risk is discussed in abstractions - ethics, bias, transparency accountability. On the ground, it shows up very differently. It shows up when an employee pastes customer complaints into a public model to save time. When a product team fine-tunes a model on historical transaction records without realizing those records contain inferred sensitive attributes. When Legal discovers, after the fact, that a vendor has been retaining prompts for "service improvement" under a clause buried in the terms of service.

The uncomfortable truth is that most AI risk incidents are not caused by malicious intent or broken models. They are caused by misclassification - treating materially different AI deployments as if they carry the same level of risk. And misclassification happens not because organizations are careless, but because they have not built the infrastructure to distinguish between a scheduling assistant and a customer intelligence engine, between a grammar tool and an automated underwriting system.

AI risk tiering is the first governance decision that survives contact with reality. This is what makes every downstream decision about data, controls, approvals, and vendors coherent and defensible.

Without it, organizations fall into one of two failure modes: blanket prohibition that drives AI into the shadow realm, or unchecked enthusiasm that outpaces control.

The First Mistake: Asking the Wrong Question

We have seen organizations roll out enterprise AI policies that read well and audit poorly. The reason is almost always the same - they treat AI as a single risk category rather than as what it actually is - a delivery mechanism for data, decisions, and automation, each of which carries wildly different risk implications depending on context.

When organizations ask, “Is this model secure?” or “Did the vendor pass review?” they are orbiting the real issue. AI risk does not sit in the model. It sits in the use case, in the combination of:

Data being processed

Decisions being influenced

People being affected

Accountability surrounding the system

One global financial services firm classified all generative AI as high risk and required privacy, legal, and security sign-off for every use case without exception. Within three months, business teams were accessing AI through personal accounts and consumer browser tools to meet deadlines. The governance program had not reduced risk. It had displaced it into places with zero visibility, zero controls, and zero accountability. This is the failure mode that risk tiering is specifically designed to prevent. When governance cannot distinguish between a low-stakes tool and a consequential decision system, it becomes either an obstacle or a fiction, - and determined business units will make it the latter.

Use Case Intake: Where Classification Happens or is Lost

The most important control in AI governance is not a policy, a vendor questionnaire, or a monitoring dashboard. It is use case intake discipline, which involves an organization asking questions such as:

Is this AI producing an analysis or outcome that may impact legal rights?

Is this AI informing a human decision, or acting in place of one?

Is the data processed transiently, or does it persist in vendor logs?

Is the impact of an error reversible, or does harm crystallize before anyone can intervene?

Who is accountable if it fails?

These questions do not surface from a single stakeholder filling up a form. They surface from a structured conversation, led by someone with enough cross-functional judgment to probe beneath the business owner's description of their own tool.

Consider what happened inside one mid-market firm. A sales enablement team deployed an AI assistant to summarize customer call transcripts. At intake, it was classified as an internal productivity tool with no significant data considerations, low risk, Tier 1. Six months later, those summaries were being automatically fed into CRM records that triggered renewal and upsell recommendations to relationship managers. What began as Tier 1 had become Tier 3 through ordinary organizational behaviour, with zero reassessment along the way. No system was breached, nor any policy violated.

The classification was simply never revisited. This is not a technology failure or a tooling gap. It is a classification failure at intake and a change management failure thereafter, and it is the most common AI governance failure pattern in practice.

Risk Is Not Static and That’s Where Governance Breaks

Even when classification is done correctly at deployment, most programs fail the next step: they assume it holds when it doesn’t.

AI risk evolves continuously:

Volume scales from hundreds to hundreds of thousands of interactions

Outputs get reused in new workflows

Systems expand into new decisions

Vendors change terms or retention practices

Regulations shift underneath existing deployments

A system does not need to change technically for its risk profile to change materially.

An HR chatbot that initially answered policy questions was correctly classified at Tier 2. Over time, it began suggesting performance actions based on patterns in historical case data. No one paused to re-tier it. The organization discovered the shift during an internal audit, after the system had been operating outside its assessed risk profile for months. This is tier drift, and it is nearly universal in successful AI deployments precisely because successful tools attract new use cases organically.

Risk changes when access expands, when vendors update their terms, when models are swapped out beneath the same interface, when data sources shift, and when outputs begin feeding downstream processes they were never assessed for. Volume is particularly underappreciated: a use case that processes a few hundred interactions per week carries a qualitatively different exposure than the same system at hundreds of thousands. Tier assignments without recertification schedules and change-triggered reassessment are historical records, not governance.

Any of the following should trigger an immediate classification re-assessment:

a material change to data inputs or addition of data sources,

a significant expansion in user population or interaction volume,

a change to the vendor AI’s infrastructure or terms,

AI outputs beginning to feed a new external or downstream workflow,

a relevant breach incident or near-miss,

a new regulatory guidance that affects the use case's legal context.

In practice, these triggers do not surface on their own. Re-classification only happens when it is tied to existing organizational gates and clear ownership. Mature programs anchor AI tier change triggers to events where such change may occur i.e. access requests, data source additions, model or prompt updates, production releases, and vendor or contract updates. These events automatically prompt a simple re-tiering check by a named owner, typically the product or system owner. When tier reassessment is treated as part of normal change management rather than a separate governance activity - misclassification is caught early, before it becomes visible through audits, incidents, or regulatory review.

The Real Risk Is Still the Data but Now It Moves Differently

Every experienced privacy and security leader understands that data drives risk. AI does not change that principle. It multiplies the number of ways data can escape its original context, often invisibly and often without any actor intending harm. In a conventional IT environment, data sits in databases and moves through defined pipelines. In AI systems, data is copied into prompts, transformed into embeddings, re-expressed in outputs that carry real world impact, logged or used by vendors, and sometimes reused for model training, explicitly under the terms of service, or implicitly as behavioural signal. The data lifecycle in AI is radically less legible than in traditional processing environments, and teams that do not account for this underestimate their exposure.

In one enterprise, engineers troubleshooting a generative AI integration pasted production error logs containing API tokens and customer identifiers into a third-party model. No system was breached. The action felt informal and transient to the people doing it. Yet regulated data left the organizational environment in a way that potentially met breach notification thresholds.

In another case, a compliance team used AI to draft internal investigation summaries. Those drafts were later appended to case files and retained indefinitely. No one had assessed the AI's outputs as confidential regulated records with their own retention and lifecycle obligations as the data that produced them.

Four Dimensions That Determine Risk Tier for a Use Case

Effective AI risk assessment does not require complexity. It requires focus on the right dimensions. We consistently use the following four dimensions to determine whether an organization is managing an operational convenience or a system that demands serious governance infrastructure.

Impact severity asks not whether the AI can be wrong, but what happens when it is. A mis-summarized internal memo is bearable noise. A miscategorized customer complaint that suppresses escalation is a credible risk. The question is: what is the worst realistic outcome of a systematic error, and who bears it?

Decision influence is where AI risk is most consistently underestimated. Advisory systems feel safe until humans begin to rely on them implicitly. Autonomy does not announce itself; it emerges gradually through trust, convenience, and the compounding of small dependencies. A model that flags elevated fraud risk, even when a human nominally makes the final call, is functionally making a consequential determination if the override rate is negligible, and the downstream effect is service restriction. Human presence in a process is not itself a control. Human judgment, with the authority and information to meaningfully intervene, is.

Data sensitivity in context requires looking beyond input classification to inferential output. A system processing names, postal codes, and transaction frequency, none of which is classified as sensitive in isolation, can produce inferences about income, household composition, and health conditions with meaningful accuracy. If the governance framework evaluates only what goes into the model without considering what the model can infer or generate, it is measuring the wrong thing.

Exposure and scale are the dimensions that most governance programs fail to revisit after initial classification. A tool used by five analysts behaves very differently when rolled out to fifteen thousand employees or exposed to customers. Risk compounds with adoption and reach. The blast radius of a biased output pattern, a systematic error, or a data leakage event scales directly with the number of interactions.

| AI Risk Tier | What This Means in Practice | Use Cases | Governance/Control Expectation |

|---|---|---|---|

| Tier 1 Minimal Risk | AI is used for internal productivity or assistance and does not materially influence decisions, affect individuals, or involve personal or sensitive data. Outputs are reversible, errors are local, and impact is limited to efficiency or quality of work. | Internal drafting and summarization, code assistance, meeting notes, internal Q&A over non-sensitive content. | Lightweight Governance: classification, named business owner, approved tools, clear acceptable-use guidance (including data boundaries), and basic logging. No formal privacy or AI impact assessment required. |

| Tier 2 Moderate Risk | AI informs internal decisions or workflows using business data, but human judgment remains meaningful and outcomes have limited external or rights-based impact. Errors may influence decisions but are generally detectable and correctable. | Internal knowledge search, HR policy assistants, marketing content generation using customer segments, financial planning support tools (non-decisional). | Requires clear data handling rules, vendor diligence, and periodic re-tiering. Targeted privacy or security review may be required depending on data, but full PIAs or AI impact assessments are not default. |

| Tier 3 High Risk | AI materially influences or enables decisions that affect customers, employees, or regulated populations, or processes personal, sensitive, or inferential data in ways that create plausible harm if the system fails, biases emerge, or data is misused. | Customer service AI affecting outcomes, recruitment screening, fraud detection, credit or underwriting support, HR performance insights, compliance investigation drafting. | Full governance stack required: AI impact assessment, PIA/DPIA where applicable, bias and discrimination evaluation, explainability and accountability documentation, defined human-in-the-loop controls, monitoring, and incident response integration. |

| Tier 4 Prohibited Risk | AI use cases that are unlawful, violate fundamental rights, or cannot be made compliant through reasonable controls. Risk is not meaningfully mitigable through governance or technical measures. | Social scoring, manipulative or deceptive AI targeting vulnerable groups, disallowed biometric or surveillance uses, AI systems prohibited under applicable law. | Deployment is not permitted. Requires legal escalation, executive awareness, and immediate cessation if identified. |

Three Points about this structure are worth stating plainly.

The tier belongs to the use case, not the model, not the vendor, and not the department.

The same SaaS platform can occupy different tiers in different deployments within the same organization, and that is governance working correctly, not a contradiction. A model can be a tier 1 productivity tool in one context and tier 3 regulated decision system in another.

Tier 3 represents the highest level of risk that can be managed through controls. These systems should be deployed but with governance rigor proportionate to their impact. Tier 4 is categorically different. It represents use cases that are unlawful, rights-violating, or otherwise non-permissible. At this boundary, governance is no longer about mitigation, it is about prohibition.

Control Mapping: Proportional or It Fails

The fastest way to destroy a functioning AI governance program is to demand Tier 3 controls for Tier 1 use cases. Teams comply once then route around the process permanently. The program loses credibility exactly where it needs it most. Conversely, under-controlling Tier 3 systems is how organizations end up defending the indefensible in front of regulators and boards. And failing to identify Tier 4 use cases at all is how prohibited risk enters the organization under the guise of innovation.

Over-controlling low-risk AI kills governance. Under-controlling high-risk AI kills credibility.

Proportionate control mapping means that Tier 1 moves quickly with lightweight oversight i.e. a named owner, an acceptable use policy, and basic logging. Tier 2 adds privacy and vendor diligence without requiring a full impact assessment. Tier 3 requires the complete governance stack: AI Impact Assessment, PIA, bias/discrimination evaluation, explainability documentation, defined human review protocols, organizational accountability, adversarial testing, continuous monitoring and incident response integration. Tier 4 is not subject to control scaling, rather it is subject to exclusion. These use cases must not be deployed and require legal escalation if identified. In short, the rigor of the control must match the severity of the potential harm.

The structural fix is making intake and tier assignment a mandatory gate in the project approval and procurement process, equal in standing to budget authorization. The cultural fix is harder and more important, because AI governance functions that sit outside the flow of development and deployment will always be catching up to what the business has already done.

The only version of AI governance that genuinely holds under scrutiny is one embedded in the people who build, buy, and operate AI. For example, where a data scientist recognizes when a model crosses a tier boundary, where a product owner owns the accuracy of the tier assessment over time, and where procurement understands that vendor selection is not the same thing as use case governance.

If you cannot explain clearly why one AI system in your organization is Tier 1 and another is Tier 3, you do not have AI governance.

The Next Question This Exercise Always Surfaces

Every rigorous AI risk tiering exercise arrives at the same inflection point. The tier is assigned, the controls are mapped, and the business owner asks the question they have not properly thought through before

“Can we actually put this data into this AI system?”

What inputs are appropriate for the prompt? What can be used for fine-tuning? What retention obligations apply to what the model has already processed?

These are not simple questions, and organizations that treat them as obvious may be taking on risks they have not properly scoped. The questions that determine the data boundaries for an AI system (what data is safe to use, and under what conditions) is the subject of the next article in this series.

If risk tiering tells you how much scrutiny a use case deployment demands, data boundary for AI systems tell you whether the inputs that deployment requires should be permitted at all. Together, they form the operational core of an AI governance program that holds up when it matters.

Frequently Asked Questions

-

AI risk tiering is the process of classifying AI use cases into distinct risk levels based on the severity of potential harm, the degree to which the system influences decisions, and the nature of data they process. It matters because, without tiering, organizations apply disproportionate controls relative to harm. Treating all AI deployments as equal, whether a scheduling assistant or an automated underwriting system, leads to either blanket prohibition that drives AI underground, or unchecked adoption that outpaces control. Risk tiering is the foundational governance decision that makes every downstream choice about data, controls, approvals, and vendors coherent and defensible.

-

To classify AI use cases by risk level, evaluate four dimensions: impact severity (what is the worst realistic outcome of a systematic error), decision influence (whether AI meaningfully shapes or replaces human judgment), data sensitivity (including what can the system can infer or generate, not just what it ingests), and exposure and scale (how widely errors or misuse would propagate and how many people are affected if something goes wrong). Classification belongs to the use case, not the model, vendor, or the department, and must be reassessed whenever the use case materially changes.

-

Misclassifying an AI system as low-risk means it operates without the controls proportionate to its actual harm potential. In practice, this creates three compounding problems: the system is deployed without proper privacy, bias or accountability review; outputs begin feeding consequential decisions that were never assessed; and by the time the misclassification is discovered, through an audit, incident, or regulatory review, harm has already materialized and may be irreversible. Misclassification also undermines the organization’s ability to demonstrate due diligence, increasing regulatory, legal, and accountability exposure. Most AI risk incidents are not caused by malicious intent or broken models. They are caused by misclassification.

-

An AI system is high-risk when it scores significantly on at least one of four dimensions: impact severity (where errors cause harm that is difficult or impossible to reverse), decision influence (where the system materially shapes outcomes for customers, employees, or regulated populations even when a human nominally approves), data sensitivity (where the system processes or infers personal, sensitive, or protected attributes), and scale (where volume of interactions amplifies the blast radius of any error or bias). While no single dimension should be assessed in isolation, severity in any single dimension may be sufficient to elevate a system to high risk. Governance programs that evaluate only one miss the compounding effect of the others.

-

Tier drift occurs when an AI system's risk profile increases materially after deployment, through expanded data inputs, higher interaction volumes, new downstream workflows, or vendor term changes, without triggering a corresponding reassessment of its risk classification. It is common in successful AI deployments precisely because useful tools attract new use cases organically. Prevention requires anchoring reassessment triggers to existing organizational change events: access requests, data source additions, model or prompt updates, production releases, and vendor contract renewals. Responsibility for reassessment should sit with a named system or product owner, supported by built-in governance workflow, rather than relying on ad hoc review.

-

Every AI use case requires documented risk classification, but not every use case requires the same level of governance review. The quickest way to create a dysfunctional AI governance program is to demand high-risk controls for low-risk systems. Teams comply once, then route around the process permanently. Proportionate governance means Tier 1 systems move quickly with lightweight oversight: a named owner, an acceptable use policy, and basic logging. Only Tier 3 systems require the full governance stack, including impact assessments, bias evaluation, explainability documentation, and continuous monitoring. The rigor of the control must match the severity of the potential harm.

-

Employees bypass AI governance policies when those policies cannot distinguish between a low-stakes productivity tool and a consequential decision system and respond by treating both identically. When every AI use case requires the same level of approval, review, and sign-off regardless of risk, business teams facing real deadlines will access AI through personal accounts and consumer tools with zero organizational visibility or control. Overly restrictive governance does not reduce AI risk. It displaces it into places where visibility and accountability do not exist. Risk tiering solves this by making governance proportionate, fast for low-risk and rigorous for high-risk.

-

Prohibited AI use cases, classified as Tier 4, are those that are unlawful, violate fundamental rights, or cannot be made compliant through reasonable technical or governance measures. Examples include social scoring systems that evaluate individuals based on behavior or personal characteristics, AI designed to manipulate vulnerable groups through deceptive techniques, disallowed biometric or surveillance applications, and systems explicitly prohibited under applicable law such as the EU AI Act's Article 5. At this boundary, governance is no longer about mitigation. It is about prohibition. Prohibited use cases require legal escalation, executive awareness, and immediate cessation if identified in operation.

-

An AI system's risk classification must be reassessed immediately when any of the following occurs: a material change to data inputs or addition of new data sources; a significant expansion in user population or interaction volume; a change to the vendor's AI infrastructure or terms of service; AI outputs beginning to feed a new external or downstream workflow; a relevant breach incident or near-miss; or new regulatory guidance that affects the use case's legal context. A system does not need to change technically for its risk profile to change materially. Volume alone, scaling from hundreds to hundreds of thousands of interactions, represents a qualitatively different exposure. Many organizations also require periodic, time-based reassessment to ensure classifications remain current even in the absence of obvious change.

-

Human-in-the-loop (HITL) means a human reviews, approves or can override AI outputs before they produce consequences. As a governance control, it is only meaningful when the human reviewer has the authority, information, and practical capacity to meaningfully intervene, not merely nominal sign-off in a workflow. HITL is not enough when override rates are negligible, when reviewers lack the context to evaluate AI outputs critically, or when volume makes genuine review operationally impossible. In such cases, the AI system is functionally determining outcomes, regardless of nominal human approval. Human presence in a process is not itself a control: human judgment with real agency is.